New Z-code Mixture of Experts models improve quality, efficiency in Translator and Azure AI

Microsoft is making upgrades to Translator and other Azure AI services powered by a new family of artificial intelligence models its researchers have developed called Z-code, which offer the kind of performance and quality benefits that other large-scale language models have but can be run much more efficiently.

"Our goal is to help everyone and every organization on the planet to communicate better, and to achieve that goal there are really two important dimensions - we want the quality of translations to be as good as possible and we want to support as many languages as possible," said Xuedong Huang, Microsoft technical fellow and Azure AI chief technology officer.

Z-code takes advantage of shared linguistic elements across multiple languages through transfer learning - which applies knowledge from one task to another related task - to improve quality for machine translation and other language understanding tasks. It also helps extend those capabilities beyond the most common languages across the globe to underrepresented languages that have less available training data.

"With Z-code we are really making amazing progress because we are leveraging both transfer learning and multitask learning from monolingual and multilingual data to create a state-of-the-art language model that we believe has the best combination of quality, performance and efficiency that we can provide to our customers," Huang said.

These models use a sparse "Mixture of Experts" approach that is more efficient to run because it only needs to engage a portion of the model to complete a task, as opposed to other architectures that have to activate an entire AI model to run every request. This architecture allows massive scale in the number of model parameters while keeping the amount of compute constant.
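To make the "more parameters, same compute" point concrete, here is a minimal arithmetic sketch. It is not Z-code's actual configuration: the function name `moe_layer_params`, the expert size, and the choice of routing each token to one expert are all assumptions for illustration, and the small router itself is ignored.

```python
from typing import Tuple

def moe_layer_params(num_experts: int, params_per_expert: int, top_k: int) -> Tuple[int, int]:
    """Return (total, active-per-token) expert parameter counts for one sparse layer.

    Hypothetical helper: assumes every expert has the same size and that
    each token is routed to at most `top_k` experts.
    """
    total = num_experts * params_per_expert
    active = min(top_k, num_experts) * params_per_expert
    return total, active

# Growing the number of experts grows the model, but the parameters
# actually used for any single token stay the same.
for n in (1, 8, 64):
    total, active = moe_layer_params(n, params_per_expert=1_000_000, top_k=1)
    print(f"{n:>2} experts: total={total:,}  active per token={active:,}")
```

Under these assumptions the total parameter count grows linearly with the number of experts, while the per-token work is fixed by `top_k`.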

To put these models into production, Microsoft is using NVIDIA GPUs and Triton Inference Server to deploy and scale them efficiently for high-performance inference.

Microsoft has recently deployed Z-code models to improve common language understanding tasks such as named entity recognition, text summarization, custom text classification and key phrase extraction across its Azure AI services. But this is the first time a company has publicly demonstrated that it can use this new class of Mixture of Experts models to power machine translation products.

The new Z-code-based translation model is now available, by invitation initially, to customers using document translation in Translator, a Microsoft Azure Cognitive Service that is part of Azure AI.

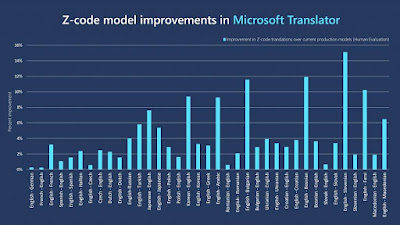

Microsoft's Z-code models consistently improved translation quality over current production models, according to common industry metrics. In contrast with typical multilingual transfer learning approaches, which usually show AI quality gains in languages that have fewer direct translation examples available for training, the Z-code Mixture of Experts models show consistent gains even in the largest languages.

Human evaluators in a blind test commissioned by Microsoft found that the Z-code Mixture of Experts models improved translations across languages, with an average gain of 4%. For instance, the models improved English to French translations by 3.2%, English to Turkish by 5.8%, Japanese to English by 7.6%, English to Arabic by 9.3% and English to Slovenian by 15%.

Creating more powerful and integrative AI systems

Z-code is part of Microsoft's larger XYZ-code initiative, which seeks to combine models for text, vision, audio and multiple languages to create more powerful and integrative AI systems that can speak, hear, see and understand people better.

Over the past few years, Microsoft has developed models that have matched human performance in conversational speech recognition, machine translation, image captioning, SuperGLUE natural language understanding and commonsense question answering. These breakthroughs provide the foundation to realize more ambitious AI systems that can achieve multisensory and multilingual learning that is closer to how people learn and understand, Huang said.

"Those are the pieces, the building blocks that we are using to build a truly differentiated intelligence… and to form production systems that are cost efficient," Huang said.

Z-code models were developed as part of Microsoft's AI at Scale and Turing initiatives, which seek to develop large models that are pretrained on vast amounts of textual data to understand nuances of language - which can be integrated in multiple Microsoft products and also made available to customers for their own uses.

The same underlying model can be fine-tuned to perform different language understanding tasks such as translating between languages, summarizing a speech, offering ways to complete a sentence or generating suggested tweets, instead of having to develop separate models for each of those narrow purposes.

Many of these language models, however, are so large that it can be challenging to integrate them into real-world products. But Z-code Mixture of Experts models are what's known as "sparse," which means that they only activate a fraction of the model's parameters to perform an individual task, as opposed to engaging the whole model every time.

That makes them much more cost efficient to run, in the same way that it's cheaper and more efficient to only heat your house in winter during the times of day that you need it and in the spaces that you regularly use, rather than keeping a furnace running full blast all the time.
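The idea of activating only a fraction of the model can be sketched with a toy top-k router. This is an illustrative example only, not Microsoft's implementation: the `ToyMoE` class, its random weights, and the choice of `top_k=2` are assumptions made for the sketch, and real systems run this on GPUs with learned routers.

```python
import math
import random

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

class ToyMoE:
    """Hypothetical sparse layer: a router picks top_k experts per input."""

    def __init__(self, dim, num_experts, top_k=2, seed=0):
        rng = random.Random(seed)
        self.top_k = top_k
        # Router: one scoring vector per expert (random here, learned in practice).
        self.router = [[rng.gauss(0, 1) for _ in range(dim)]
                       for _ in range(num_experts)]
        # Each expert is a small dim x dim linear map.
        self.experts = [[[rng.gauss(0, 1) for _ in range(dim)]
                         for _ in range(dim)]
                        for _ in range(num_experts)]

    def forward(self, x):
        scores = [dot(w, x) for w in self.router]
        # Keep only the top_k highest-scoring experts; the rest never run.
        chosen = sorted(range(len(scores)), key=lambda i: scores[i],
                        reverse=True)[:self.top_k]
        gates = softmax([scores[i] for i in chosen])
        out = [0.0] * len(x)
        for gate, i in zip(gates, chosen):
            y = [dot(row, x) for row in self.experts[i]]
            out = [o + gate * v for o, v in zip(out, y)]
        return out, chosen

moe = ToyMoE(dim=4, num_experts=8, top_k=2)
out, chosen = moe.forward([1.0, 0.5, -0.5, 2.0])
print(len(chosen), "of", len(moe.experts), "experts ran")  # 2 of 8 experts ran
```

In this sketch, six of the eight experts do no work for the given input, which is the source of the cost savings the heating analogy describes.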

Microsoft researchers collaborated closely with NVIDIA to deploy Z-code Mixture of Experts models in production for the first time, and on NVIDIA GPUs. Using the NVIDIA Triton Inference Server, they were able to deploy these models using a more efficient runtime that used CUTLASS and FasterTransformer to optimize these new types of models. The new runtime was able to achieve up to a 27x speedup over non-optimized GPU runtimes.

The Z-code team also worked closely with Microsoft DeepSpeed researchers to learn how to efficiently train massive Mixture of Experts models, such as Z-code, as well as more modestly sized Z-code models for production scenarios.

"We have been able to build this one model that can cover a lot of languages and serve different tasks from summarization to text generation to translation and be useful for many other Microsoft teams," said Hany Hassan Awadalla, Microsoft principal researcher and research manager who helps lead development of Z-code models for Translator and other Azure Cognitive Services.

"So we are trying to spread this across the company and reduce their deployment costs, which is the vision of AI at Scale generally," he said.

Integrating research into customer products

The Z-code models are initially being used to boost Translator's document translation features, which allow customers to translate entire Word documents, PDFs, PowerPoint presentations or other documents into new languages with all the formatting preserved.

| Original sentence | Human translation | Previous machine translation | Improved Z-code Mixture of Experts model translation |
| --- | --- | --- | --- |
| Komisija u razumnom roku sažetke dostavlja Sekretarijatu ICCAT-a. | The Commission will forward the summaries to the ICCAT Secretariat within a reasonable period of time. | Within a reasonable period of time, the Commission submits the summary to the ICCAT Secretariat. | The Commission will send the summaries to the ICCAT Secretariat within a reasonable period of time. |
| Мисля, че трябва да бъдем внимателни, за да облекчим производителите, дистрибуторите и търговците на дребно. | I think we must be careful to ease the burden on manufacturers, distributors and retailers. | I think we should be careful to ease manufacturers, distributors and retailers. | I think we should be careful to make it easier for manufacturers, distributors and retailers. |

Microsoft has developed a new class of AI Mixture of Experts models called Z-code that are boosting accuracy in Translator, an Azure Cognitive Service, as shown in these before-and-after examples.

Document translation is the first use because Z-code runs most efficiently when it has batches of sentences to translate at once, and document translation lends itself well to that, said Vishal Chowdhary, partner development manager of Translator, who led efforts to turn a research model into something that could be deployed in real-world production scenarios and made available to customers.

Previously, Translator needed 20 separate models to translate between 10 languages: one for English to French, French to English, English to Macedonian, Macedonian to English, and so on.

Now, a single Z-code production model can translate all 10 languages to and from English, eliminating the need for multiple systems. Larger research Z-code models have been able to translate directly between 101 languages in 10,000 directions, without going through English first.
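The counts quoted above follow from simple arithmetic, sketched below. The function names are hypothetical, and the sketch assumes that the 10 languages are each paired with English in both directions and that "10,000 directions" is a rounded count of ordered source-target pairs among 101 languages.

```python
def english_centric_directions(num_languages: int) -> int:
    """Directions when each language is paired with English both ways."""
    return 2 * num_languages

def all_pairs_directions(num_languages: int) -> int:
    """Ordered (source, target) pairs among the languages, source != target."""
    return num_languages * (num_languages - 1)

print(english_centric_directions(10))  # 20
print(all_pairs_directions(101))       # 10100
```

One model per direction scales linearly when everything routes through English but quadratically for direct translation, which is why a single multilingual model matters more as the language count grows.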

Companies with international operations in multiple markets often need a way to improve communication across departments with employees who speak many different languages. Today's AI models need huge translation data sets with dialects for training, and there may not be enough data for all the desired languages and dialects, particularly in smaller markets.

The ability to share knowledge across different languages enables Z-code to produce more accurate results for underrepresented languages that don't have a large amount of translation resources, Huang said.

"The 107 languages we currently support might cover what's spoken at the Olympics or the United Nations," Huang said. "But there are 7,000 languages spoken around the world and there are a lot of small communities we can't support yet. We want to be fully multilingual with our AI because our goal is to serve every citizen on the planet."